Reflections on security within a corporate environment

Or, the illusion of generalised intimacy

So, a bit of a different post this week. While I’m obviously not going into detail on the more personal stuff, a couple of things happened recently that, combined, gave me an interesting perspective that I hadn’t actually considered before. This one is not going to cite a lot of psychological research or ideas, although it being me there’ll obviously be some. This post is more about exploring some aspects of my previous view, how it’s changed, and how we can maybe think differently about things.

(Yes, this is a lower-effort post due to sudden lack of time and energy. Why do you ask? Should get back to my normal stuff from next week, got an interesting topic I want to explore already half-written.)

Inciting incidents

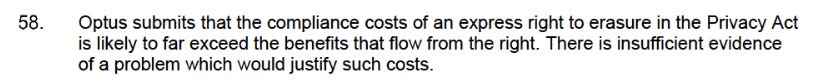

The first thing that happened was the Optus breach. If you somehow missed the event, Optus is a telecommunications company in Australia, a major one. One estimate I saw said that they had records for over 10 million people. Given Australia has a population of somewhere around 25 million… yeah. Major data breach, especially when considering Australia’s very intensive KYC laws which require you to show, and the company to store for several years, basically any form of ID you can think of. Combine this with the submission Optus made two years ago to the Australian federal government on proposed changes to the federal privacy legislation, which essentially said “deleting this is too hard and there’s no point” (when deleting it would have mitigated much of the harm), and you get a lot of bad feeling.

There’s been plenty of discussion in the privacy space, in the media and I’m sure among the general populace, given this may have affected around 40% of the population, going by those numbers. This discussion has been wide-ranging, but a common feeling is outrage, with people asking the very reasonable “why do we have to hand over so much information if you’re unwilling to protect it?” and “so why are the laws this way anyway?”. I’m not getting into the details of this breach since they aren’t important for this post; what’s important is it happened, the damage could have been mitigated, and people are angry about it.

The other thing that happened is I picked up a job at a major corporation. I’m obviously not going to tell you which one or what I’ll be doing, but the important details are these:

The work of necessity involves working with deeply personal, even potentially sensitive, information

The work involves contact with a vulnerable population

The work involves engaging with several different technical systems and pieces of software that honestly are kind of confusing to work with and understand

In some rare cases, the speed, accuracy and stability of the systems can make a literal life-and-death difference

In order to learn the various protocols and systems involved, I had to undergo multiple days of training and observation and testing and re-training. To give you an idea, even with my objectively unusually high level of education and my experience in grasping complex, nuanced details of documentation, ethical processes, interacting with software, psychology and security mechanisms, I was regularly left completely flat at the end of the day, with barely enough energy for basic life maintenance tasks like washing dishes or doing laundry. The important thing here is that the learning was intensive and complicated, and given the context of the work, there is extremely little margin for error.

So, that was the context I’ve spent the last few weeks in.

So what?

So when I heard about the Optus data breach, my reaction initially was probably much the same as yours (unless you were caught up, anyway): “well, that’s what happens when you have a lot of personal information and woefully inadequate security standards in place. They should have done better guarding the information they had”. And to a point I still think that’s true – while details are still coming out, objectively they probably could have done better on that side of things.

But I’m less interested in the back-end side of things – encrypted information at rest, secured APIs, permission partitions, network protocols, all that good stuff. Partly because I can’t really parse that terribly well, but mostly because, honestly, it’s rarely the problem anyway. Almost all of these breaches are at least partially down to some degree of social engineering, whether it be MFA fatigue or the good old “Hey, it’s Bob from IT, what’s your password?” trick that still somehow works, and that’s where I’m looking today.

When I was first introduced to the system, I was flabbergasted by how many security or privacy flaws there were, from my inexpert perspective. They run Windows 10 with security updates disabled (although I don’t know if this means they don’t get them, or if they’ve just locked the setting so I can’t accidentally turn them off and they manage updates elsewhere), they insist on using either Chrome or Edge, and their password policies are the worst kind of corporate nonsense that sounds good on paper until you actually try to apply them:

Your password needs to be at least 15 characters long

Your password must have at least one lowercase letter, one uppercase letter, one digit and one special character

No character may be used more than three times

You cannot use certain patterns of letters or numbers

(Plus others I’ve forgotten, but I’m sure you get the picture.)
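To make those rules a bit more concrete, here is a rough sketch of what a checker for them might look like. This is my own illustration, not the actual system: I never saw how the rules were enforced, the function name `policy_violations` is made up, and the "certain patterns" list in particular is a pure guess since I don't know what the real one contains.

```python
import re
from collections import Counter

# Hypothetical stand-ins for the "certain patterns" rule; the real list is unknown.
FORBIDDEN_PATTERNS = ("1234", "abcd", "qwerty", "password")


def policy_violations(password: str) -> list[str]:
    """Return the rules a candidate password breaks (an empty list means it passes)."""
    problems = []
    if len(password) < 15:
        problems.append("shorter than 15 characters")
    if not re.search(r"[a-z]", password):
        problems.append("no lowercase letter")
    if not re.search(r"[A-Z]", password):
        problems.append("no uppercase letter")
    if not re.search(r"[0-9]", password):
        problems.append("no digit")
    # Assumption: "special" means anything that is not an ASCII letter or digit.
    if not re.search(r"[^A-Za-z0-9]", password):
        problems.append("no special character")
    # No single character may appear more than three times.
    if any(count > 3 for count in Counter(password).values()):
        problems.append("a character is used more than three times")
    # Pattern check is case-insensitive in this sketch.
    lowered = password.lower()
    if any(pattern in lowered for pattern in FORBIDDEN_PATTERNS):
        problems.append("contains a forbidden pattern")
    return problems
```

Calling `policy_violations("Tuesday-Lamp_42!Fox")` returns an empty list, while `policy_violations("Password1234!")` comes back with the length and forbidden-pattern complaints. The point is only that every rule is trivial for a machine to check and a lot less trivial for a human to hold in their head.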

All of these are exactly what you’re told passwords should be – long, random and complex; very hard to guess and practically impossible to brute force. I guarantee you the majority of my passwords would fit quite neatly within that list of restrictions. Except for two major problems:

You must change your password every month, practically guaranteeing people are going to do the “iterate the number at the end” trick, thereby basically undercutting the entire purpose

You can’t use a password manager, for both technical (you can’t use a password manager to log into the system to begin with because you can’t access the password manager) and protocol reasons (you cannot have anything near the workstations that might even slightly help you steal the personal information you’re coming into contact with). So you need to remember this password, and change it pretty frequently. Maybe you can manage that, but I certainly can’t. So they’re not going to be random, at best pseudorandom, and probably not even that.
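If you want to see how little the monthly change buys you, here is a small sketch of the "iterate the number at the end" trick, plus a check for spotting it. Both function names are mine and purely illustrative; I have no idea whether the real system tries to detect this at all.

```python
import re


def next_rotation(password: str) -> str:
    """Bump the trailing number by one, the way a tired human rotates a password."""
    match = re.search(r"(\d+)$", password)
    if match is None:
        # No trailing number yet, so just stick a 1 on the end.
        return password + "1"
    digits = match.group(1)
    # Keep the original width so "07" becomes "08" rather than "8".
    bumped = str(int(digits) + 1).zfill(len(digits))
    return password[: match.start()] + bumped


def _without_trailing_digits(password: str) -> str:
    return re.sub(r"\d+$", "", password)


def looks_like_rotation(old: str, new: str) -> bool:
    """True if the two passwords differ only in a trailing run of digits."""
    return old != new and _without_trailing_digits(old) == _without_trailing_digits(new)
```

So `next_rotation("Tuesday-Lamp_Fox!07")` gives `"Tuesday-Lamp_Fox!08"`, which is technically a brand new password and practically the same one. Anyone who has seen last month's password can guess this month's in one try.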

So the system has some glaring holes from a privacy standpoint, the password policies are set up to basically guarantee poor quality passwords that will, practically speaking, rarely if ever change, and the systems are opaque enough that even people who use them every day have poor insight as to how they actually handle the information so detecting leaks is difficult at best. Maybe they have really good back-end stuff to handle this, but I didn’t receive any mention of that. I certainly experienced several technical issues, and while they were mostly fixed pretty quickly, that was largely because I had someone actively there to help, and they were the kinds of things that got attention quickly. So there’s clearly a degree of bodging in the system (as there always is with any kind of living system that changes over time).

Oh, and some of the really crucial systems had – and I’m not kidding here – 4-character usernames and passwords that will never change and are stored in cleartext. In and of themselves they do almost nothing, you need to know how to use them and that’s not exactly intuitive, but still.

On the other side, while I was in training, there was a wide range of people undergoing the same training – young people studying, immigrants from other countries, middle-aged parents, retirees, people looking to make more money while they get their own businesses worked out and off the ground, all sorts. I don’t want to say it’s a representative sample of my city, but it’s not too far off, especially given the low sample size.

Within the training sample, roughly half the group regularly struggled with things that even I would consider basic, like the Windows search function, refreshing a website or changing the sound output device. Among the others, many displayed poor security practices like clicking on unclear links, or just filling in credentials on websites with little assurance beyond broad context that they were legitimate. And although I was assured they had strict Internet controls, I experienced little to no limitation on my surfing (not that I seriously tested the edges), so being served malware is completely possible.

I don’t want to paint too negative a picture. It’s not that the people were unusually stupid – they were often very savvy in other areas and picked up other aspects of the work pretty quickly, including nuances I struggled with. My point is that this was not a high-technical-ability group.

So when I was reflecting on how they could improve their system to be less insecure and more privacy-friendly, I quickly realised most of the (to me) pretty basic things were not feasible either in a corporate environment, or without limiting the majority of the population due to technical limitations. For example: Chrome was the de facto default browser used. Since Chrome is notoriously privacy-unfriendly, I immediately thought they could use Firefox instead (or others, but I’m trying to keep it somewhat mainstream here. I don’t care about your obscure browser using some protocol that nobody has ever heard of and doesn’t work with 99% of the Internet anyway – the grandmother test is in full effect here; at least one of the trainees was a literal grandmother).

But they couldn’t, because key software literally only works in Chrome. They would have to rewrite it, from scratch, to accommodate other browsers, and that would inevitably make the software less stable and increase maintenance costs and effort – they had to do so not too long ago when Internet Explorer was retired and they’re still adjusting from that. Remember, there are literally cases where life and death depends on this software working perfectly. The margin for error here is extremely small. In addition, Chrome – for all its many flaws – is pretty easy from an end-user perspective, unless you’re trying to do something pretty weird, which lowers the barrier for entry significantly, which decreases training costs and user error.

The password thing follows a similar pattern. Yes, initially it looks like a system set up for just awful, awful password hygiene, but again, this is a context where you need to ensure as much worker-account security as possible without being able to use things like a password manager or hardware key or whatever. So the requirements basically involve long passwords with at least some resistance to brute forcing, even if they don’t change very often in a practical sense. Again, the workers aren’t people with advanced degrees who do memory-improving training for fun; these are middle-aged parents and retirees trying to make a living. Saving passwords in the browser and staying logged in across multiple sessions is not just common, it’s the default behaviour.

When I first encountered the system, it struck me as a mess of corporate policies put in place by people who had heard some good ideas but didn’t know enough or care enough to have them filter down effectively. But after a few days, I began to see it differently: as a compromise between the demands of security and privacy and the realities of a large corporation working with potentially very sensitive information, low-technical-skilled workers and very little margin for error.

Now, don’t get me wrong. I’m not saying we shouldn’t hold corporations accountable for their poor standards. Many – in fact, I’d say most – could and should do way, way better both in the amount of information they collect and hold (Optus, Meta) and how they protect it. Nor should we ignore the often deeply unethical ways they gather or use this information without our consent or knowledge, sometimes actively deceptively or illegally. Surveillance capitalism does demand ever-more-invasive means of gathering information, and the most benign result of that is ever-more-manipulative commercial and political advertising.

But I think this case study applies more broadly. See, I generally describe myself as not especially technically adept, and I do think that’s true. I know many, many people who are at least an order of magnitude more technically capable and knowledgeable than I am, who intuitively understand complex systems I can barely grasp the broad outlines of. But I am, from an objective standpoint, probably more technically capable than most; my home system dual-boots two operating systems, I’m putting together a home server, I’m comfortable working on the command-line interface for many tasks and reading at least basic technical documentation, and I can usually troubleshoot most of the problems I encounter.

Don’t get me wrong, all of those things are only the most basic form of those things; I dual-boot, but in the simplest possible way, one of the OS’s is Windows, and every time I have to fix it it takes me at least a couple of days of aggravation. I am comfortable with the CLI, but only on pretty basic and familiar stuff like updates. I can read documentation, but it takes me a long time to pull what I need out of them.

But I know that even one of those would leave my parents or a lot of my friends completely lost within seconds. It’s not that it’s hard, so much as it’s a completely different set of skills that they’ve never had to develop or even think about developing before. It’s taken me multiple years to get to this level of competence, and it’s really not that impressive relative to most people I know.

True story: I was talking to someone whose technical skills I deeply, deeply respect a few days ago. Not about anything especially interesting, but I mentioned a study I’d read once (I can’t remember which one, but it was to do with how people struggle to differentiate faces of people from races other than their own). Not going into detail, just some very basic methodology stuff and what they found. They expressed surprise that I could just remember studies I’d read over a decade ago like that – not because they think I’m stupid, but to them that’s an amazing feat, a mark of high expertise and intellect. But to me, it was just a study I’d read a while ago and remembered because it was interesting.

The point being, we’re often blind to what we’re good at. It’s pretty easy for me to remember psychological studies, because I’m interested in them, I’ve read a lot, and I’ve built up a pretty good conceptual network to link them together and retrieve them. I’m often surprised when students struggle with this (to me) pretty basic task – they read the study last week and it’s on the topic we’re talking about at the moment. How can they not remember it? Are they stupid?

The obvious answer is no (usually). They’re just novices – literally, they’re learning the basics of techniques I’ve mastered to the degree that I don’t even consciously use them anymore. Not because I’m some kind of super-genius – I know many people who put my psychological knowledge, both general and specific, to shame – but because I’ve just got many years on them.

In addition, my home system is just that – it’s my home system. It honestly doesn’t really have to serve anyone other than me, and I’m the one who patched it together over a long time to suit my knowledge and use case. I suspect most people in the “privacy community” are similar – their systems are great… for them. Put someone else in front of it, and it rapidly becomes completely baffling.

The above user makes me cringe in many ways, but more to the point I’m sure their system (if it doesn’t implode from all the unnecessary redundancy) is perfectly intuitive for them to navigate. I wouldn’t begin to understand how to work it – not because they’re so much smarter than me, but because it’s not my system.

Shared systems, especially corporate systems, don’t have that luxury. They have to work for as many people as possible, with as low a margin of error as possible, while still trying to have some degree of control over access and the information they handle. Imagine if you called your bank, and they had to spend 10 minutes getting the system to do something basic. It’d be maddening, right? But we don’t blink spending double or triple that on our own systems – partially because we can usually address our own problems on our own schedule, but largely because we have that intimate familiarity with our systems, so we understand why it’s refusing to load a given program, or at least have a reasonable guess, and – and this is important – we have the ability to attempt to fix it ourselves rather than through someone else’s hands and eyes.

So what’s your point?

Every system inevitably has to serve multiple goals. My home system stores various types of personal information, connects to the Internet, serves as my primary word processor, provides multiple forms of media both entertaining and educational, and performs several other functions. Most corporate systems have to do an even more complex constellation of tasks, many of which pull against each other in terms of values (storing and retrieving information is contrary to the value of protecting that information from misuse, for example). In addition, they need to work with an extremely low error rate, be usable by people with quite low technical skills, possibly across a wide geographical area, and be flexible enough to be updated to account for changing needs, contexts and security threats with minimal disruption to functioning.

So while we can and should hold corporations responsible for their many, many failings and misdeeds, and, given the hostile context we exist within, take as much control as we can of our information, I think we’re often too quick to condemn a lot of the systems the corporations use as just obviously bad. I mean, they often are, but without a good understanding of why something is the way it is or the trade-offs at play, you’re going to be sharply limited in your ability to fix that problem.