What is privacy?

In which I totally answer a very simple question.

In the privacy “community”, we spend an awful lot of time asking the question “is this private? Is this more or less private than that?” Occasionally we ask “is this private from this person/organisation?” which is a little bit more rigorous. But we very rarely stop to ask “what is privacy? What, at base, do we actually mean when we say we’re seeking this Thing?”

There have been a few attempts to answer that question in various ways. Daniel Solove described it as a collection of things which don’t have any key aspects in common, but fall under a broad and somewhat arbitrary umbrella of controlling access (be it manipulative access or simple informational access) to our bodies or minds or history or whatever. Plato defined privacy as being able to hide from the watchful eyes of society as a whole, with inevitable corrupting effects. But I’m neither a philosopher nor a legal scholar – my field is psychology, so what does psychology have to say about this?

Turns out… not a whole lot. Not due to lack of interest, but – in part – as a result of how science works, and in part because of what function privacy serves.

What is grounded theory?

So, the basic idea of science is that we look at the world, come up with a guess about how it works, then test that guess. We then change the guess based on what we observe, refining it and testing it more rigorously and coming to a deeper understanding of why it’s the way it is. You all learned this in school, I’m sure.

However, unsurprisingly, there’s a bit more to it than that.

That process is notoriously error-prone, from bad testing methods (e.g. sampling bias) to bad analysis (e.g. extrapolating from a small or non-representative sample). That’s all a very interesting and complicated field that I really think more people should be more familiar with, especially given the replication crisis happening across basically all of science. However, there’s a more subtle and nuanced aspect that I need to quickly touch on: how you come up with that theory in the first place.

This is surprisingly controversial. If you come up with a theory before gathering data, then you risk collecting data that confirms your theory (consciously or unconsciously). We’ve seen this from too many theories to count. But also, if the data does not support your theory, then it’s really tempting to add on tiny little changes to the theory. This was probably most famous with the geocentric model of the universe – when observations of certain planetary bodies did not match the predictions, the theory was adapted to include things like “epicycles”, where the planets orbited something that in turn orbited the Earth, like the Moon orbits the Earth, which orbits the Sun. This eventually leads to an incomprehensibly complex theory filled with caveats and exceptions, which is eventually replaced by a simpler theory, which then collects complexities in turn. This process isn’t even necessarily wrong – you are supposed to change your theory to fit the evidence – but the point is that it’s not necessarily obvious how to change the theory, or at what point you throw the whole thing out.

But if you don’t come up with a theory first, how can you say that the data you collect is relevant? For example, I want to know why tree leaves are green, so I get a thermometer and measure the leaf temperature as opposed to the trunk temperature. I find that the leaves are generally cooler than the trunk, and therefore conclude that the leaves are green because they’re cooler. Now, if I go on to more rigorously test this theory, eventually it should prove wrong and I should converge on the true answer, but that can take a really, really long time. Fun fact, although we could see bacteria in the late 1600s, the first solid evidence that they caused disease wasn’t until the early 1800s, and it didn’t become the dominant view for several decades after that, and even now a lot of the protocols we have around disease draw instead from miasma theory, albeit with a germ theory costume. In psychology, the MBTI is still around even though it’s (almost) entirely nonsense. Science might trend towards the truth, but if it does it does so very, very slowly.

And even if the data you collect is relevant – and this is really important to psychology – how do you analyse it? Let’s say I’m looking to describe personality, and I don’t want to take a previous theory with its biases and epicycles, I just want to see what’s there. What does the data say? I go around and ask a whole bunch of people – a large sample that is representative of the population that I care about. After that, what do I have? A bunch of words people have used. Without a previous guiding theory, how do I derive any meaning from them? Do I look at the most common words used? Do I try to create categories in the data based on the words? Do I subdivide the data by demographic information (e.g. race, gender, age, income)? Do I use a complicated and notoriously subjective statistical test to come up with one of the best measures of personality we have so far?

The important point is not that either of these approaches is bad; they’re not. Both are extremely valid in the right context, and we need both in order to come to a decent understanding of the universe we live within, especially if what we’re looking at (in this case, people) changes over time. But both also have drawbacks that you need to be aware of and try to mitigate.

The theory I want to talk about today is one of the latter types, what we call ‘atheoretical’ approaches – grounded theory. Grounded theory, put insultingly simply, means you gather a bunch of data (usually from talking to people), then basically look at it and the theory “emerges” from the data, if you’ve done the gathering properly. It’s not suitable for all questions, of course, but if you’re trying to get a broad overview of a topic, it’s pretty good. Such as, “what is privacy, psychologically speaking?”

What is privacy?

Much, although not all, of this post will derive from this dissertation. Partially because it’s a good body of work that I plan to unabashedly rip off for my own work, and partially because it’s one of the few I could find examining privacy from a psychological perspective using a grounded theory approach.

Disclosure

One thing they did that I found quite interesting was that they ended up drawing parallels between “privacy” and “disclosure”. In one sense this is obvious – if I tell people a piece of information, I’m giving up the privacy around that information to at least some extent. But they allude to a more nuanced relationship:

“Self-disclosure has been defined very loosely as the verbal sharing of information, but Fisher (1984) questions such an inclusive definition. He believes that what has been traditionally described as self-disclosure is in fact a broad class of information sharing behavior, not all of which should be labeled self-disclosure… Self-presentation is believed to be different from self-disclosure because the information that is shared is for the purpose of creating a certain image… This process of self-presentation also serves to create an idealized version of ourselves, which may not be true for self-disclosure.”

So, in short, how does untruthful or misleading disclosure factor in? Because by sharing something true but irrelevant about myself, I may actually preserve my ability to hide other information, either through implication or distraction. For example: if a person’s partner asks them if they’re cheating on them, the person can respond with “I’m asexual, what do you think?” which implies that they’re not cheating (because asexual people don’t experience sexual attraction), but does not actually rule it out (the person may be being emotionally unfaithful, or engaging in sexual behaviour for reasons other than sexual attraction which might count as cheating in their relationship). So the person discloses that they’re asexual (although presumably their partner already knows this) in order to hide the other information.

Or in a less immoral example, a person who is asexual but not “out” may make reference to their romantic partner to other people, leading those people to assume that the person is allosexual (that is, someone who does experience sexual attraction), thus preserving their privacy regarding their sexual orientation by relying on people’s assumptions. This has a history in the concept of so-called “beards” or decoy partners for homosexual people to appear heterosexual to a potentially hostile public.

In addition, disclosure can reduce privacy with one person, but that person may then go on to help preserve the privacy more generally. So a person might tell their friend that they’re having an affair, and the friend helps to cover it up, thus preserving the person’s privacy with everyone else (including the partner).

So clearly “disclosure” and “privacy” are not simply opposed forces, but in fact have a complex relationship and function depending on whether the disclosure is true or misleading. We see this in actual behaviour regarding “disinformation” – where you put in false information when disclosure is made mandatory but may not actually be necessary for a functional relationship (e.g. Netflix does not need my actual home address, so if they made it mandatory I could put in something false and it would work just as well while preserving my privacy in this regard).

Information control

The idea of privacy as the ability to control the disclosure or concealment of aspects of the self will not be new to anyone who has spent any time looking at philosophical or legal examinations of the concept.

This work lays out an interesting way of thinking about it, by examining what happens when this control is broken. In their “privacy invasion cycle”, the person goes through the following stages:

TRUST: Basically, the person views their broad situation as more-or-less secure, or at least feels that they have a broad sense of the risks and dynamics at play given their environment. People don’t go about consciously evaluating things all the time, that’d be exhausting – we see this with people who have severe trauma, it’s called hyper-vigilance and it is, by all accounts, not a fun time and not great for your mental health. So while people aren’t blindly trusting or naive (usually), they don’t go overboard.

REALISATION AND RESPONSE: When we realise that our trust/privacy has been violated, we further realise that the assumptions we based that trust on are incomplete or incorrect. We then adjust our assumptions or trust in some way – sometimes slightly, by ascribing the violation to unusual context or situations, and sometimes drastically, by deeming someone entirely untrustworthy.

Although the work does not specify this, it seems plausible that the adaptation can go the other way as well – when we perceive our privacy or trust has been upheld, we’re more likely to extend that trust in that situation. And when it’s violated, we’re less likely. This leads to a dynamic level of trust depending on the context we exist within.

Of course, there’s another variable there: confirmation bias. When we expect our trust to be violated, we’re less likely to extend that trust, and will interpret any evidence as supporting our existing viewpoint: “Oh, I knew I was right not to trust Bob to look after my cat, look who he voted for in the last election” would be an extreme but plausible example. In principle this could go either way, but since the consequences of our trust being violated tend to be more obvious and painful than trust being upheld, we’d expect lower levels of trust to be more stable.
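If it helps to see that dynamic laid out, here is a toy sketch of it in Python. To be clear, this is my own illustration and not anything from the dissertation: the function names, the update sizes (`gain`, `loss`) and the 0.5 cut-off are all made-up assumptions, chosen only so that violations hurt more than upheld trust helps, and so that ambiguous evidence gets read in line with whatever we already believe.

```python
def update_trust(trust, upheld, gain=0.05, loss=0.20):
    """Nudge trust (0.0 to 1.0) up a little when it is upheld,
    and down a lot when it is violated. The step sizes are
    illustrative assumptions, not measured values."""
    if upheld:
        return trust + gain * (1.0 - trust)
    return trust - loss * trust


def interpret(trust, evidence):
    """Decide whether a piece of evidence counts as 'trust upheld'.

    evidence is one of "upheld", "violated" or "ambiguous".
    Ambiguous evidence is read in line with the current belief,
    which is the (assumed) confirmation bias step.
    """
    if evidence == "ambiguous":
        return trust >= 0.5
    return evidence == "upheld"


def run(trust, events):
    """Apply a sequence of events to a starting trust level."""
    for evidence in events:
        trust = update_trust(trust, interpret(trust, evidence))
    return trust


# Same starting point, same ten ambiguous events afterwards;
# only the very first experience differs.
after_violation = run(0.6, ["violated"] + ["ambiguous"] * 10)
after_upheld = run(0.6, ["upheld"] + ["ambiguous"] * 10)
print(after_violation, after_upheld)
```

In this sketch a single early violation is enough to tip the person below the cut-off, after which every ambiguous event is read as another violation and trust keeps sliding, while the person whose trust was upheld only climbs slowly. That is the sense in which lower trust is the more stable state.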

And indeed, this is what we see in the privacy “community”. People perceive their trust or privacy being violated (even potentially when it has not actually been), and they downgrade accordingly. But in so doing, they form a view that the world is fundamentally hostile to privacy (not without basis, in fairness), and as such they interpret all evidence in line with that hypothesis. Combine this with a tendency to read more information regarding privacy violations (because when a safeguard works it’s rarely noticed, and research papers saying “hey, turns out AES is pretty good” don’t get nearly as much attention, assuming they can actually get published), and a social tendency towards rewarding more extreme approaches, and you end up with progressively lower and less trusting assumptions.

So in terms of actionable take-aways from this view, I would recommend that, when crafting your privacy solutions, you ask yourself what assumptions are underlying your decisions, and whether you can justify them or not. Sometimes you can’t, and that’s not necessarily a bad thing (I can’t prove that Google is reading my e-mail), but it is very different to things that you can provide evidence for, and needs to be considered as such.

Privacy as identity

The last sub-point I wanted to cover from the dissertation is something I’ve wanted to talk about for a while but haven’t been able to properly express in words. I still can’t, but hopefully this will serve as a vague wave in that direction as a conversation-starter: the idea of being “private” as a kind of personality trait, or as an aspect of someone’s identity, often contrasted with being “open”.

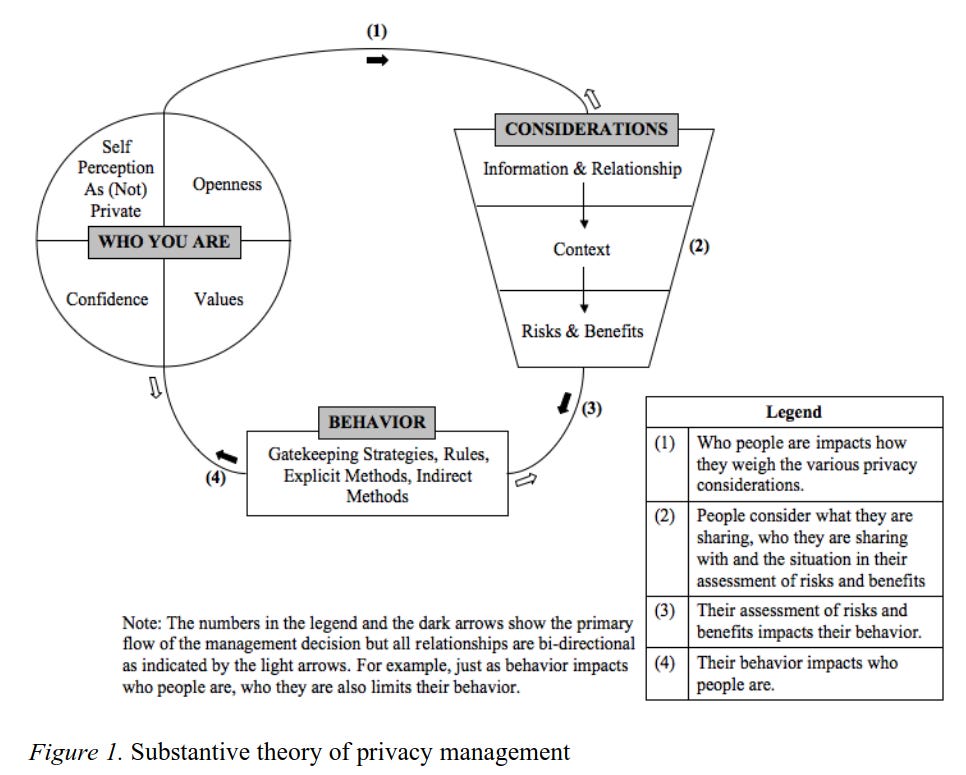

“Speaking about privacy with people often gave me the feeling that essentially I was asking them, “Who are you?”… When I asked participants what privacy meant to them or how they managed it, conversation seemed to turn to core elements of their personality and their approach to relationships. … It highlights two aspects of privacy that struck me through the interviews – privacy is something that is very personal in nature, and yet it remains, on the whole, an unexamined part of one’s personality. … There were several key aspects to people’s approach to privacy, including their perceived privacy, their level of openness, their sense of confidence, and their values.”

The piece talks about how some of the participants would describe themselves as “a private person”, often with some indication that this was viewed negatively either by the person themselves or society as a whole. Sometimes this took the form of reframing or mitigating the claim:

“I mean, I’m not, I’m pretty private and reserved, like I said, but at the same time I’m not really like hoarding any secrets or something. I just like to, I’d rather not share everything, I guess, like things that are kind of sort of trivial, I guess, but they still, I don’t know, I still sort of have them logged in my brain.”

Notice how the person distinguishes between being “private” and being secretive, and feels compelled to assure the listener that what they’re hiding is not that important or interesting. I think this is the broad attitude that a lot of people share, and is what people’s objections to the “nothing to hide” argument are rooted in.

These people tended to describe their privacy as a part of their personality, but importantly they tended to describe it as not a stable or global part of their personality. That is, they were private in some areas some of the time, depending on the situation and their values etc.

They viewed their personality as the starting point, adjusted according to the broad context (e.g. their relationship with the people involved), and that suggested their actual behaviours.

But I want to emphasise an important point: the people spoken to did not view privacy as something that is achieved by certain behaviours, but something more innate, more akin to a personality trait. Often this was contrasted with being “open”, or willing to share information about themselves more freely:

“I’m also the kind of person who would be willing to share things with strangers. Whereas some people are very, more closed with that. So I think that it depends on who you are.”

So what is privacy?

Well, it turns out it’s complicated. News at 11, I know. Turns out the thing smart people have spent so long trying to define is hard to define.

This work tends to suggest two main ways of viewing privacy: subjective and identity-aspect.

The subjective perspective is basically emotional; you’re private if you feel that the important information about you is controlled and revealed only as you wish. Even if your identity has been stolen and your e-mail is basically public and every detail of your life is known and harvested, as long as you feel in control, then under this view you are experiencing privacy. And I honestly think there’s something to that – no privacy is absolute, no system is unhackable, no protection is infallible or all-encompassing. And without meaning to gloss over the situation of people who are being stalked, or live under a hostile government or society, for most people in the privacy “community” this feeling seems to be what they’re seeking.

The identity-aspect part of privacy is the idea that privacy is who you are, that it’s a part of your personality or self-concept. Maybe it’s the result of your experiences or just innate, but it’s not something you achieve using protective behaviours, it’s something you just are. You are a private person, so you don’t share personal information, and may be less likely to use disclosure-encouraging services like Facebook or Twitter or whatever. You’re not more private because you don’t use them, you don’t use them because you’re more private. And again, I don’t think this is entirely without merit – some people do seem more willing than others to talk about aspects of their lives. Some people I’ve known seem to enjoy talking about their sex lives in far too much detail, while others are very, very private about basically their entire personal lives.

And maybe that’s part of why psychology doesn’t have much to say about privacy – because it’s not really a state you can be in.